- University of Southern Denmark (SDU) to use Quantinuum Helios, supported by the Danish e-Infrastructure Consortium (DeiC)

- Access to Helios enables SDU to test and refine fault-tolerant algorithms and error-correction codes under realistic hardware conditions

- The collaboration supports research at a scale of 48 logical qubits, positioning Denmark at the forefront of scalable, practical quantum computing

- Researchers exploring the scientific foundations for future development of applications in fields including pharmaceuticals, finance, and defense

Progress in quantum computing is measured not only by hardware advances but also by the algorithms and quantum error-correction codes that turn quantum systems into useful computational tools.

Thanks to recent hardware advances, researchers are increasingly sharpening their tools to probe the performance of quantum algorithms and understand how they behave in realistic conditions – where stability, system architecture and algorithm design all shape performance.

A new Denmark-based collaboration between the University of Southern Denmark (SDU), Quantinuum, and the Danish e-Infrastructure Consortium (DeiC) will utilize Quantinuum Helios. Researchers at SDU’s Centre for Quantum Mathematics, led by Jørgen Ellegaard Andersen, will use Helios to pursue research into topological quantum computing.

Their work could help explain how and why successful quantum algorithms perform as they do, informing the development of high-performance algorithms suited to emerging quantum systems. They’re exploring the scientific foundations that support future quantum applications across areas including pharmaceuticals, finance, and defense.

“We are thrilled to gain access to Quantinuum’s high-fidelity Helios system. This collaboration gives us a unique opportunity to test the limits of our algorithms and evaluate system performance, while advancing fundamental research and laying the foundation for future applications.”

– Professor Jørgen Ellegaard Andersen, Director of the Centre for Quantum Mathematics at the University of Southern Denmark

Why topological methods matter

Topological quantum computing is an area of research that connects quantum computation with deep mathematical structures. It includes the study of error correcting codes known as surface codes that encode quantum information in the global properties of systems of logical qubits.

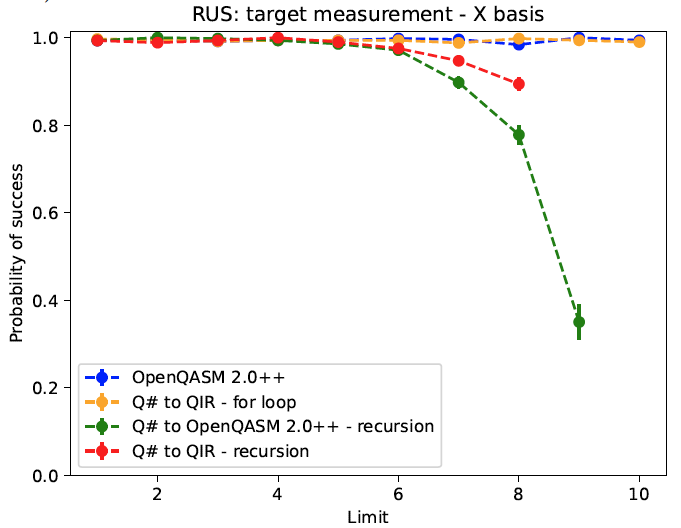

The research team will explore how these codes behave, and how they may support the development of fault-tolerant quantum algorithms in practical implementations under realistic conditions.
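As a rough illustration of what encoding information in global properties can mean, the sketch below models a toy toric code (a surface code on a lattice with periodic boundaries) and tracks only one type of error. It is a minimal classical sketch under stated assumptions, not the SDU team's method or anything run on Helios; the lattice size and all function names are hypothetical choices made for this example.

```python
# Toy model of a toric code (a surface code on a periodic lattice).
# Only phase-flip errors are tracked, as bits on lattice edges.
# All names and the lattice size are illustrative assumptions.

L = 4  # hypothetical lattice size: L x L vertices with periodic boundaries


def empty_edges():
    """Return error bits for horizontal (h) and vertical (v) edges."""
    h = [[0] * L for _ in range(L)]  # h[i][j]: vertex (i, j) -> (i, j+1)
    v = [[0] * L for _ in range(L)]  # v[i][j]: vertex (i, j) -> (i+1, j)
    return h, v


def syndrome(h, v):
    """Local checks: parity of errors on the four edges at each vertex."""
    return [
        [(h[i][j] + h[i][(j - 1) % L] + v[i][j] + v[(i - 1) % L][j]) % 2
         for j in range(L)]
        for i in range(L)
    ]


def count_defects(h, v):
    """Number of vertex checks that flag an error."""
    return sum(sum(row) for row in syndrome(h, v))


def winding(h, v):
    """Global property: crossing parity with two fixed cuts of the torus.

    Only meaningful for error patterns with no defects (closed loops).
    """
    wraps_horizontally = sum(h[i][0] for i in range(L)) % 2
    wraps_vertically = sum(v[0][j] for j in range(L)) % 2
    return wraps_horizontally, wraps_vertically


# A single error is caught by the two checks at its endpoints.
h, v = empty_edges()
h[0][0] = 1
single_error_defects = count_defects(h, v)

# A small closed loop around one plaquette: no defects, no logical effect.
h, v = empty_edges()
h[0][0] = h[1][0] = v[0][0] = v[0][1] = 1
small_loop = (count_defects(h, v), winding(h, v))

# A loop wrapping the whole torus: invisible to every local check,
# yet it changes the global winding parity (a logical error).
h, v = empty_edges()
h[0] = [1] * L
wrapped_loop = (count_defects(h, v), winding(h, v))

print(single_error_defects, small_loop, wrapped_loop)
```

In this toy model, an isolated error triggers two local checks, while an error chain that wraps all the way around the lattice triggers none and can only be distinguished by a global quantity. That is the sense in which the encoded information lives in the system as a whole rather than in any single qubit.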

This distinction between theory and practical implementation matters. In theory, topological approaches offer a rich framework for designing algorithms and error-correcting codes. In practice, researchers need to understand how those ideas perform when implemented on real systems, where questions of noise, stability, overhead, and scaling become central. The collaboration will allow the SDU team to investigate these questions directly.

New ways to benchmark quantum processors

Beyond individual algorithms and codes, the research will also develop tools for benchmarking quantum processors. The goal is to develop new ways to characterize fidelity and stability in regimes that can be difficult to access.

The team will also explore hybrid quantum–classical approaches, including machine-learning techniques assisted by quantum hardware, to study the mathematical structures at the heart of topological quantum computing. This work reflects a broader field of research in which quantum and classical methods are used together, each contributing to parts of a computational problem.
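The division of labor in a hybrid approach can be sketched generically: a classical optimizer proposes circuit parameters, and a quantum processor evaluates a cost for them. The example below is only an illustration of that pattern, not the team's technique. The quantum evaluation is replaced by a closed-form stand-in for a single-qubit rotation, and the function names, starting point, and learning rate are all assumptions.

```python
import math

# Generic hybrid loop: a classical optimizer tunes a circuit parameter,
# while a quantum processor (simulated here) evaluates the cost.
# Function names and hyperparameters are illustrative assumptions.


def expectation_z(theta):
    """Stand-in for hardware: <Z> after RY(theta) on |0> equals cos(theta)."""
    return math.cos(theta)


def parameter_shift_gradient(f, theta):
    """Gradient from two shifted evaluations (parameter-shift rule)."""
    return 0.5 * (f(theta + math.pi / 2) - f(theta - math.pi / 2))


def minimize(f, theta=0.3, learning_rate=0.4, steps=100):
    """Classical gradient descent driven by 'quantum' evaluations."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(f, theta)
    return theta, f(theta)


theta_opt, energy = minimize(expectation_z)
print(theta_opt, energy)
```

On real hardware, `expectation_z` would be replaced by repeated circuit executions and averaging, so each evaluation would carry statistical and device noise that the classical side has to tolerate.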

Strengthening Denmark’s quantum ecosystem

The collaboration reflects the growing role of national quantum infrastructure in supporting research and talent development. Denmark has a long tradition of scientific innovation, and this collaboration is intended to support the country’s continued development in quantum technology.

The initiative is supported by DeiC, which played a central role in securing funding and enabling access to Quantinuum’s systems. DeiC has been assigned a particular role in developing and coordinating quantum infrastructure initiatives for the benefit of universities and industry, operating without its own commercial, sectoral, or geographical interests. This includes securing dedicated access to quantum computers, providing advisory services and supporting the development of new talent in the Danish quantum sector.

“DeiC’s special effort to secure funding and access for this research initiative is rooted in our organization’s role in relation to the Danish Government’s strategy for quantum technology.”

– Henrik Navntoft Sønderskov, Head of Quantum at the Danish e-Infrastructure Consortium

This collaboration promises to accelerate the development of practical algorithms. It is grounded in fundamental science – but its focus is practical: discovering and testing mathematical approaches to topological quantum computing that can be implemented, evaluated, and improved on real quantum hardware.

That work requires both theoretical insight and access to a system, such as Helios, that is capable of supporting meaningful scientific work.